Ming-Yang Yu, Po-Kuang Chen: eco-echo

Artist(s):

Collaborators:

- Bing-Yu Chen

- Yu-Jen Chen

- Meng-Chieh Yu

- Hsi Ji Chung

- Jen-Yuan Chiang

- Chien-Ling Tang

- Jack Hsieh

- Ming Ouhyoung

Title:

- eco-echo

Exhibition:

Creation Year:

- 2007

Category:

Artist Statement:

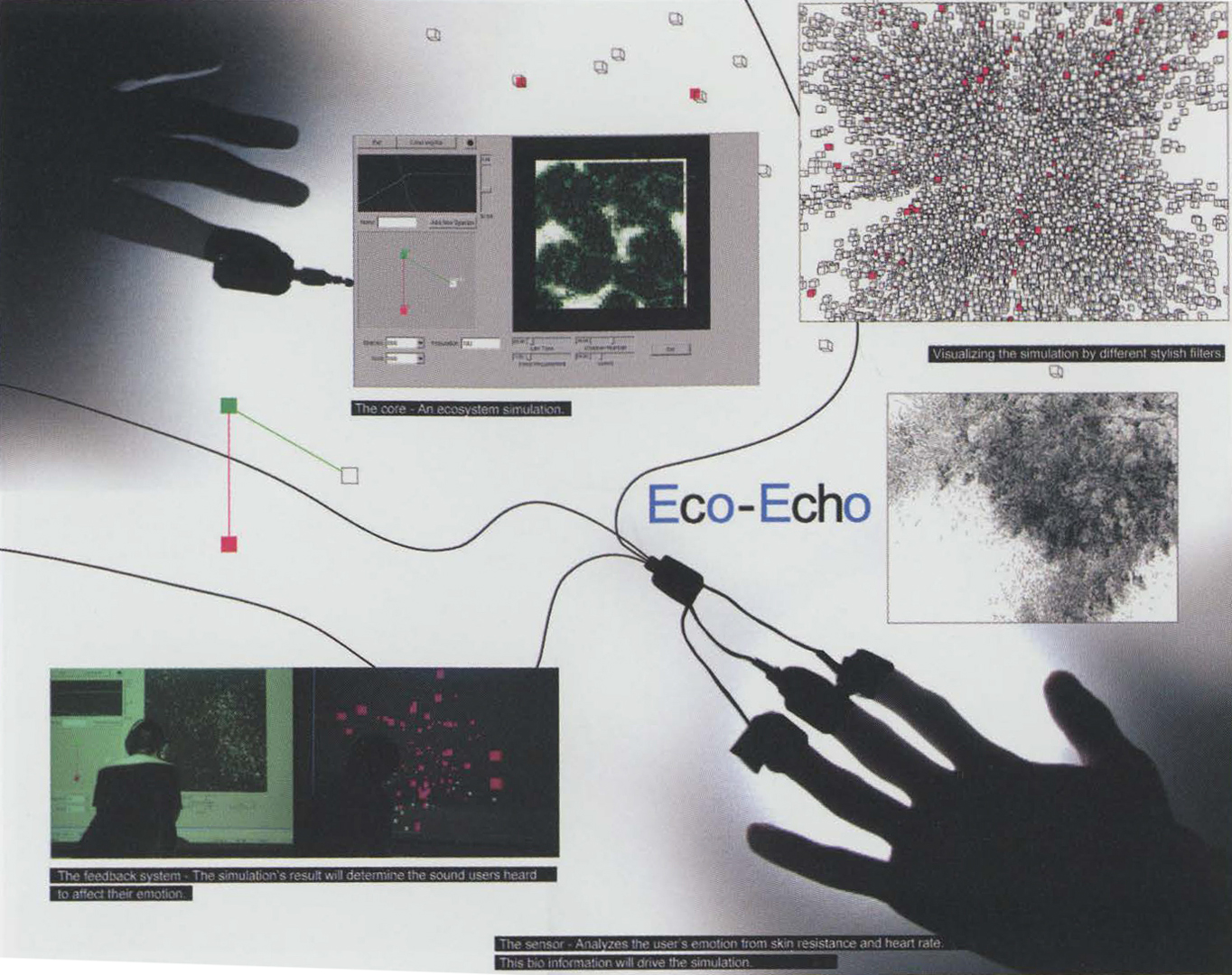

“Heaven, earth, and I are born of one, and I am at one with all that exists,” said Chuang-tzu, an ancient Chinese philosopher. In his Taoist thinking, humanity and nature are inseparable, and every human activity has repercussions. To visualize this traditional Chinese thought with a modern approach, we developed an “ecosystem simulation.” This simulation contains two worlds, virtual and real. In the virtual world, human beings determine how the world develops: the behavior of every creature in the virtual world is controlled by the viewer’s emotional response. The creatures’ behavior is displayed on the screen with sound, and this in turn can change the viewer’s feelings in the real world. The viewer plays a double role: a member of the real world and a player in the virtual world. Although the process is composed of hardware and software, “human ware” is its essential element. Using sensors and speakers as media, the viewer is a conductor of both the virtual world and the real world. The viewer can also be a producer who provides the spiritual power that leads changes in the ecosystem, and who then receives feedback from the system. This endless cycle is just as Chuang-tzu said: “Heaven, earth, and I are born of one.”

Technical Information:

This system includes three components:

1. The core is an ecosystem simulator. In the virtual world, each creature has its own parameters: life length, food requirements, speed of motion, etc. All creature behaviors simulate the real world. They breed, prey, propagate, and die, and these processes are visualized in an animation that shows the creatures through various filters controlled by the viewer.

2. The sensors detect the viewer’s heart rate and skin resistance, which reveal the intensity of the viewer’s emotional excitation. This intensity acts as an essential parameter in the feedback system.

3. The feedback system acts as a bridge between the viewer and the ecosystem. The viewer’s emotional state changes the simulation, and the simulation generates feedback to affect the viewer’s emotion with different sounds.
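
The three components above can be sketched as a small loop. This is only an illustrative sketch, not the artists' implementation: the class and function names, the sensor reference ranges, the rule that excitation raises food consumption, and the sound file names are all assumptions introduced here. The only details taken from the description are the creature parameters (life length, food requirement, speed), the two sensor inputs, and the idea that the viewer's state changes the simulation while the simulation answers with sound.

```python
from dataclasses import dataclass


@dataclass
class Creature:
    # Parameters named in the text; units and default values are assumptions.
    life_length: int         # maximum age in simulation ticks
    food_requirement: float  # food consumed per tick when the viewer is calm
    speed: float             # speed of motion (stored but not used in this sketch)
    age: int = 0
    energy: float = 5.0
    alive: bool = True


def clamp01(x: float) -> float:
    """Clamp a value to the range [0, 1]."""
    return max(0.0, min(1.0, x))


def arousal(heart_rate_bpm: float, skin_resistance_kohm: float) -> float:
    """Map two sensor readings to an excitation level in [0, 1].

    The reference ranges (60-120 bpm, 50-500 kOhm) are illustrative guesses.
    Lower skin resistance is treated as higher excitation.
    """
    hr = clamp01((heart_rate_bpm - 60.0) / 60.0)
    sr = clamp01((500.0 - skin_resistance_kohm) / 450.0)
    return 0.5 * hr + 0.5 * sr


def step(creatures, food_per_creature: float, level: float) -> int:
    """Advance every living creature by one tick; return the living count."""
    for c in creatures:
        if not c.alive:
            continue
        c.age += 1
        # Assumption: an excited viewer makes creatures burn more food.
        cost = c.food_requirement * (1.0 + level)
        c.energy += food_per_creature - cost
        if c.age >= c.life_length or c.energy <= 0:
            c.alive = False
    return sum(c.alive for c in creatures)


def choose_sound(population: int, initial: int) -> str:
    """Pick a sound cue from the surviving fraction (file names are placeholders)."""
    ratio = population / initial if initial else 0.0
    return "calm.wav" if ratio > 0.5 else "sparse.wav"
```

In an installation-style loop, each tick would read the sensors, call `arousal`, pass the result to `step`, and play whatever `choose_sound` returns, so that the sound can in turn influence the next sensor reading.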